Do you run into log rotation problems, or does your system hang or behave erratically when logs fill up the disk? Are you short on space for your important deployment JARs or WARs? Consider using Amazon Simple Storage Service (Amazon S3) to solve these issues.

Storing your logs, deployment code, and scripts in AWS S3 gives you storage that is virtually unlimited, secure, and fast.

In this tutorial, you will learn how to launch an AWS S3 bucket using Terraform. Let’s dive in.

Table of Contents

- What is the Amazon AWS S3 bucket?

- Prerequisites

- Terraform files and Terraform directory structure

- Building Terraform Configuration files to Create AWS S3 bucket using Terraform

- Uploading the Objects in the AWS S3 bucket

- Conclusion

What is the Amazon AWS S3 bucket?

Why is it called S3? The name stands for Simple Storage Service, three words that each start with “S.” AWS S3 lets you store virtually unlimited data safely and efficiently. Everything in AWS S3 is stored as an object: PDF files, zip files, text files, WAR files, or anything else. Some key facts about AWS S3 buckets are below:

- To store data in an AWS S3 bucket, you upload it as objects.

- To keep your AWS S3 bucket secure, grant only the necessary permissions to the IAM role or IAM user.

- AWS S3 bucket names are globally unique, which means no two buckets can share the same name across accounts or regions.

- Each AWS account has a default limit on the number of buckets (long set at 100); to go beyond it, you need to request a quota increase from AWS.

- Each AWS S3 bucket is owned by the AWS account that created it.

- AWS S3 buckets are created in a specific region, such as us-east-1, us-east-2, us-west-1, or us-west-2.

- Objects are created in an AWS S3 bucket through the AWS console or the AWS S3 API.

- AWS S3 buckets can be made publicly visible, which means anybody on the internet can access them, but it is recommended to keep public access blocked for all buckets unless it is truly required.
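The recommendation to keep public access blocked can be expressed in Terraform. The following is a minimal sketch, assuming the bucket resource is named aws_s3_bucket.demobucket as in the main.tf built later in this tutorial. It is a stricter alternative to the public access block used there, not an addition to it.

```hcl
# Sketch: block every form of public access on the bucket.
# Assumes a bucket resource named aws_s3_bucket.demobucket exists in the same module.
resource "aws_s3_bucket_public_access_block" "publicaccess" {
  bucket = aws_s3_bucket.demobucket.id

  block_public_acls       = true # reject requests that add public ACLs
  block_public_policy     = true # reject bucket policies that grant public access
  ignore_public_acls      = true # ignore any public ACLs already present
  restrict_public_buckets = true # limit access to buckets that have public policies
}
```

Setting all four arguments to true is the most restrictive choice; relax individual settings only when a bucket genuinely needs to be public.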

Prerequisites

- An Ubuntu machine to run Terraform commands. If you don’t have an Ubuntu machine, you can create an AWS EC2 instance in your AWS account with 4GB RAM and at least 5GB of drive space.

- Terraform installed on the Ubuntu machine. If you don’t have Terraform installed, refer to Terraform on Windows Machine / Terraform on Ubuntu Machine.

- The Ubuntu machine should have an IAM role attached with full access to create AWS S3 buckets, or administrator permissions. Because the configuration in this tutorial also creates a KMS key, the role needs permission to create KMS keys as well.

You may incur a small charge for creating an EC2 instance on Amazon Web Services (AWS).

Terraform files and Terraform directory structure

Now that you know what an AWS S3 bucket is, let’s dive into Terraform files and the Terraform directory structure, which will help you write the Terraform configuration files later in this tutorial.

Terraform code, that is, Terraform configuration files, is organized in a tree-like structure to make the code easier to understand. Configuration files use the .tf or .tf.json format, and variable values go in .tfvars files. These configuration files are placed inside Terraform modules.

Terraform modules sit at the top level of the hierarchy, where the configuration files reside. A Terraform module can also call child modules from local directories, from elsewhere on disk, or from the Terraform Registry.

A Terraform project mainly contains five files: main.tf, vars.tf, provider.tf, output.tf, and terraform.tfvars. These file names are conventions; Terraform loads every .tf file in the directory.

- main.tf – The main.tf file contains the main code where you define which resources you need to build, update, or manage.

- vars.tf – The vars.tf file declares the input variables, which are customizable and referenced inside the main.tf configuration file.

- output.tf – The output.tf file is where you declare the output parameters you wish to fetch after Terraform has run, that is, after the terraform apply command.

- .terraform – This directory contains the cached provider and module plugins, as well as the last known backend configuration. It is managed by Terraform and created after you run the terraform init command.

- terraform.tfvars – The terraform.tfvars file contains the values for the variables that are referenced in main.tf and declared in vars.tf.

- provider.tf – The provider.tf file is the most important file, where you define your Terraform providers, such as the Terraform AWS provider or the Terraform Azure provider, to authenticate with the cloud provider.
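This tutorial does not create an output.tf file, so here is a minimal sketch of what one could look like for the bucket built in the next section. The output names (bucket_name, bucket_arn, kms_key_arn) are hypothetical; the resource names aws_s3_bucket.demobucket and aws_kms_key.mykey are the ones used in this tutorial’s main.tf.

```hcl
# output.tf (illustrative sketch; the output names are hypothetical)

# Name of the bucket, useful for scripts that upload objects.
output "bucket_name" {
  description = "Name of the S3 bucket created by main.tf"
  value       = aws_s3_bucket.demobucket.id
}

# ARN of the bucket, useful when writing IAM policies.
output "bucket_arn" {
  description = "ARN of the S3 bucket"
  value       = aws_s3_bucket.demobucket.arn
}

# ARN of the KMS key that encrypts the bucket objects.
output "kms_key_arn" {
  description = "ARN of the KMS key used for default encryption"
  value       = aws_kms_key.mykey.arn
}
```

With a file like this in place, terraform apply prints the values at the end of the run, and terraform output shows them again later.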

Building Terraform Configuration files to Create AWS S3 bucket using Terraform

Now that you know what Terraform configuration files look like and how to declare each of them, in this section you will learn how to build the Terraform configuration files that create an AWS S3 bucket in your AWS account, before running any Terraform commands. Let’s get into it.

- Log in to the Ubuntu machine using your favorite SSH client.

- Create a folder named terraform-s3-demo in the /opt directory and switch to that folder.

mkdir /opt/terraform-s3-demo

cd /opt/terraform-s3-demo

- Create a file named main.tf inside the /opt/terraform-s3-demo directory and copy/paste the content below. This file does the following:

- Creates the AWS S3 bucket in the AWS account, with versioning enabled and a lifecycle rule that expires objects under the log/ prefix.

- Configures the public access settings for the AWS S3 bucket.

- Creates the KMS encryption key that protects the objects in the AWS S3 bucket.

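# Public access settings for the AWS S3 bucket. A value of false leaves public ACLs and bucket policies unblocked; set both to true to block them.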

resource "aws_s3_bucket_public_access_block" "publicaccess" {

bucket = aws_s3_bucket.demobucket.id

block_public_acls = false

block_public_policy = false

}

# Creating the encryption key which will encrypt the bucket objects

resource "aws_kms_key" "mykey" {

deletion_window_in_days = 20

}

# Creating the AWS S3 bucket.

resource "aws_s3_bucket" "demobucket" {

bucket = var.bucket

force_destroy = var.force_destroy

server_side_encryption_configuration {

rule {

apply_server_side_encryption_by_default {

kms_master_key_id = aws_kms_key.mykey.arn

sse_algorithm = "aws:kms"

}

}

}

versioning {

enabled = true

}

lifecycle_rule {

prefix = "log/"

enabled = true

expiration {

date = var.date

}

}

}
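The inline versioning, server_side_encryption_configuration, and lifecycle_rule blocks above are marked as deprecated in AWS provider 4.x and later, where each setting has its own resource. The sketch below shows the versioning and encryption settings in that newer layout, assuming provider 4.x or later; the resource labels ("demobucket") are illustrative. The lifecycle rule moves to aws_s3_bucket_lifecycle_configuration in the same way; it is left out here because that resource has its own date format requirements, which you should check in the provider documentation.

```hcl
# Sketch for AWS provider 4.x or later (an assumption).
# When using these resources, remove the matching inline blocks from
# aws_s3_bucket.demobucket so each setting is defined only once.

# Equivalent of the inline versioning block.
resource "aws_s3_bucket_versioning" "demobucket" {
  bucket = aws_s3_bucket.demobucket.id

  versioning_configuration {
    status = "Enabled"
  }
}

# Equivalent of the inline server_side_encryption_configuration block.
resource "aws_s3_bucket_server_side_encryption_configuration" "demobucket" {
  bucket = aws_s3_bucket.demobucket.id

  rule {
    apply_server_side_encryption_by_default {
      kms_master_key_id = aws_kms_key.mykey.arn
      sse_algorithm     = "aws:kms"
    }
  }
}
```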

- Create one more file named vars.tf inside the /opt/terraform-s3-demo directory and copy/paste the content below. This file declares all the variables that are referenced in the main.tf configuration file.

variable "bucket" {

type = string

}

variable "force_destroy" {

type = bool

}

variable "date" {

type = string

}
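Variable declarations can also carry a description and a validation rule, so that a bad value is caught at terraform plan rather than by AWS. The sketch below is an optional, stricter alternative to the bucket and date declarations above (use one or the other, not both). The regular expressions are simplified assumptions: they check only the general shape of a bucket name and of a YYYY-MM-DD date, not every AWS naming rule.

```hcl
# Alternative declaration of "bucket" with a basic name check.
variable "bucket" {
  type        = string
  description = "Globally unique name of the S3 bucket"

  validation {
    # 3 to 63 characters, lowercase letters, digits, dots, or hyphens,
    # starting and ending with a letter or digit.
    condition     = can(regex("^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$", var.bucket))
    error_message = "The bucket name must be 3 to 63 characters of lowercase letters, digits, dots, or hyphens, and must start and end with a letter or digit."
  }
}

# Alternative declaration of "date" with a basic format check.
variable "date" {
  type        = string
  description = "Expiration date for objects under the log/ prefix, as YYYY-MM-DD"

  validation {
    condition     = can(regex("^[0-9]{4}-[0-9]{2}-[0-9]{2}$", var.date))
    error_message = "The date must be in YYYY-MM-DD format."
  }
}
```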

- Create one more file named provider.tf inside the /opt/terraform-s3-demo directory and copy/paste the content below. The provider.tf file allows Terraform to connect to the AWS cloud.

provider "aws" {

region = "us-east-2"

}
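It can also help to pin the provider version, so that terraform init downloads a release the configuration was written for. The block below is a sketch that could sit alongside the provider block in provider.tf. Both version constraints are assumptions, not values from this tutorial; replace them with the versions you have tested. Note that on AWS provider 4.x and later, the inline bucket arguments in main.tf are expected to produce deprecation warnings.

```hcl
# Sketch: pin Terraform and the AWS provider (both constraints are assumptions).
terraform {
  required_version = ">= 1.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}
```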

- Create one more file named terraform.tfvars inside the same folder and copy/paste the content below. This file contains the values of the variables that you declared in the vars.tf file and referenced in the main.tf file.

bucket = "terraformdemobucket"

force_destroy = false

date = "2022-01-12"

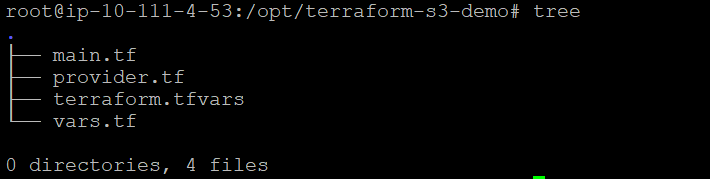

- Now the folder structure of all the files should like below.

- Now your files and code are ready for execution. Initialize the terraform using the terraform init command.

terraform init

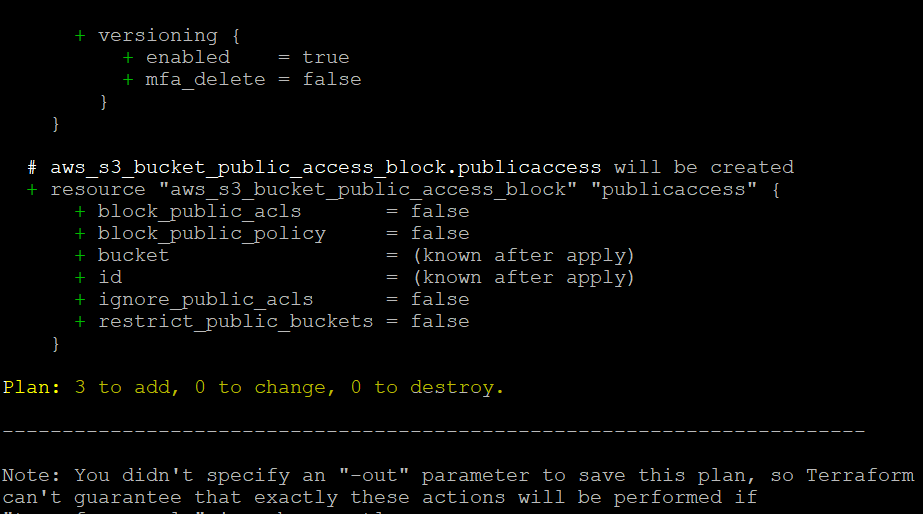

- Terraform initialized successfully. Now it’s time to run the plan command, which shows you the details of the deployment. Run the terraform plan command to confirm that the correct resources are going to be provisioned or deleted.

terraform plan

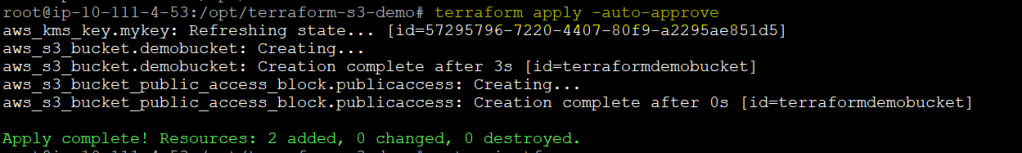

- After verification, it’s time to actually deploy the code using the terraform apply command.

terraform apply

The Terraform commands terraform init → terraform plan → terraform apply all executed successfully. But it is important to manually verify in the AWS Management Console that the AWS S3 bucket was launched.

- Open your favorite web browser and navigate to the AWS Management Console and log in.

- While in the Console, click on the search bar at the top, search for ‘S3’, and click on the S3 menu item.

Uploading the Objects in the AWS S3 bucket

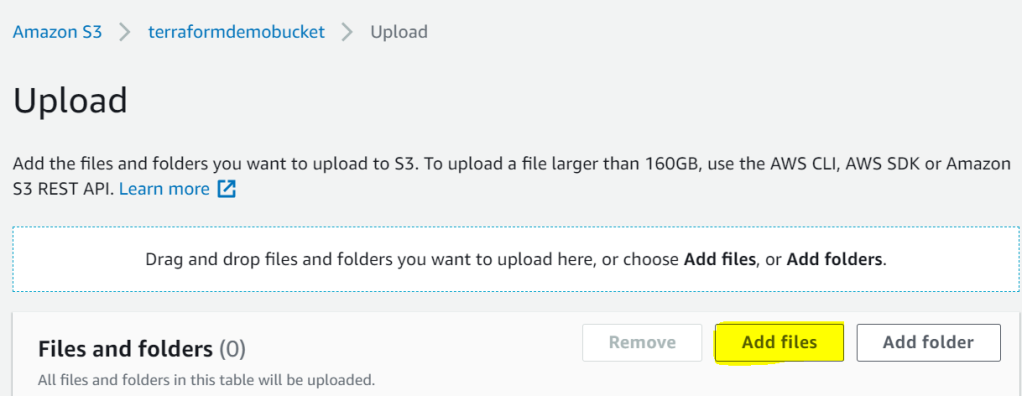

Now that the AWS S3 bucket has been created in the AWS account, let’s upload a sample text file to the bucket by clicking the Upload button.

- Now click the Add files button and choose any files that you wish to add to the newly created AWS S3 bucket. This tutorial uploads a file named sample.txt.

- As you can see, sample.txt has been uploaded successfully.
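The same upload can also be managed by Terraform instead of the console. The following is a minimal sketch, assuming AWS provider 4.x or later (older providers use the aws_s3_bucket_object resource instead) and a sample.txt file sitting next to the configuration files. The resource label "sample" is illustrative.

```hcl
# Sketch: upload sample.txt to the bucket created in main.tf.
# Assumes sample.txt exists in the same directory as the .tf files.
resource "aws_s3_object" "sample" {
  bucket = aws_s3_bucket.demobucket.id
  key    = "sample.txt"                # object name inside the bucket
  source = "${path.module}/sample.txt" # local file to upload

  # Re-upload the object whenever the local file content changes.
  source_hash = filemd5("${path.module}/sample.txt")
}
```

After adding this block, run terraform plan and terraform apply again; the object should then appear in the bucket in the same way as the manual upload.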

Conclusion

In this tutorial, you learned what Amazon AWS S3 is and how to create an Amazon AWS S3 bucket using Terraform.

Many phone apps and websites store their data on AWS S3. So what do you plan to store in your newly created AWS S3 bucket?